Bidirectional Encoder Representations from Transformers (BERT) is a language model introduced in October 2018 by researchers at Google AI Language, and it has driven significant advances in natural language processing (NLP). At release, BERT achieved remarkable results on SQuAD 1.1, a leading machine reading comprehension benchmark, surpassing human performance on both of its metrics. It also set new state-of-the-art results on 11 different NLP tasks, including pushing the GLUE benchmark score to 80.
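To make "bidirectional" concrete, here is a minimal Python sketch (not BERT's actual code) contrasting the attention pattern of a BERT-style encoder with that of a left-to-right language model. The helper names `bidirectional_mask`, `causal_mask`, and `visible_context` are hypothetical, tokens are assumed to be whitespace-split words, and real models additionally mask out padding positions.

```python
# Illustrative sketch only (not BERT's real code); helper names are hypothetical.
# mask[i][j] == 1 means position i may attend to position j.


def bidirectional_mask(n: int) -> list[list[int]]:
    """BERT-style encoder: every position attends to every position."""
    return [[1] * n for _ in range(n)]


def causal_mask(n: int) -> list[list[int]]:
    """Left-to-right model: position i attends only to positions 0..i."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


def visible_context(mask: list[list[int]], tokens: list[str], i: int) -> list[str]:
    """Return the tokens that position i may attend to under `mask`."""
    return [tok for tok, allowed in zip(tokens, mask[i]) if allowed]


if __name__ == "__main__":
    tokens = "he sat on the bank of the river".split()
    n = len(tokens)
    # Context available when building a representation for "bank" (index 4).
    print(visible_context(bidirectional_mask(n), tokens, 4))
    # → ['he', 'sat', 'on', 'the', 'bank', 'of', 'the', 'river']
    print(visible_context(causal_mask(n), tokens, 4))
    # → ['he', 'sat', 'on', 'the', 'bank']
```

Only the bidirectional pattern lets the representation of "bank" see "river", the word that disambiguates it here.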

BERT (Bidirectional Encoder Representations from Transformers) is a deep learning language model designed to improve performance on natural language processing (NLP) tasks.

Bertram Hays Jones (born September 7, 1951) is an American former professional football quarterback who played in the National Football League (NFL) for 10 seasons with the Baltimore Colts and the Los Angeles Rams.

He was named the NFL Most Valuable Player in 1976 with the Colts.

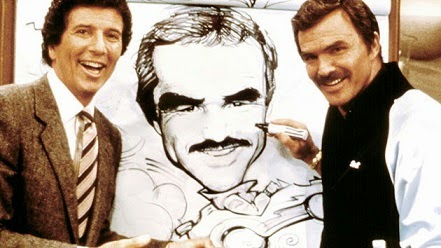

At Bert's Model A Ford Center, we have the most complete overall selection of Model A parts anywhere: we stock all the new reproduction parts in our 22,000-square-foot warehouse, and we also stock the largest supply of original parts anywhere.

Bert Convy and Burt Reynolds formed their own production company, Burt and Bert Productions, during the 1980s. Their first production was the game show Win, Lose or Draw, which debuted in 1987 as part of the NBC daytime lineup and in nightly syndication.

Learn what BERT is, how masked language modeling and transformers enable bidirectional understanding, and explore practical use cases from search to named-entity recognition (NER).

BERT is a bidirectional transformer pretrained on unlabeled text with two objectives: predicting masked tokens in a sentence and predicting whether one sentence follows another.
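The sentence-pair objective can be sketched as a data-preparation step. In the BERT paper, sentence B really follows sentence A half the time (labeled IsNext) and is otherwise a random sentence from the corpus (labeled NotNext); each pair is packed as `[CLS] A [SEP] B [SEP]` with segment ids distinguishing the two halves. The function names below are hypothetical, tokens are assumed to be whitespace-split words rather than WordPiece pieces, and negatives are drawn from the same small list as a simplification.

```python
import random

# Hypothetical helpers; whitespace tokens assumed instead of WordPiece.


def format_pair(tokens_a: list[str], tokens_b: list[str]) -> tuple[list[str], list[int]]:
    """Pack two segments as [CLS] A [SEP] B [SEP] and return segment ids.

    Segment id 0 covers [CLS], sentence A and the first [SEP];
    segment id 1 covers sentence B and the final [SEP].
    """
    tokens = ["[CLS]"] + tokens_a + ["[SEP]"] + tokens_b + ["[SEP]"]
    segment_ids = [0] * (len(tokens_a) + 2) + [1] * (len(tokens_b) + 1)
    return tokens, segment_ids


def make_nsp_examples(sentences: list[str], rng: random.Random) -> list[tuple[str, str, str]]:
    """Build (A, B, label) triples: about 50% IsNext, 50% NotNext."""
    examples = []
    for i in range(len(sentences) - 1):
        a, true_next = sentences[i], sentences[i + 1]
        if rng.random() < 0.5:
            examples.append((a, true_next, "IsNext"))
        else:
            # Negative example: any sentence except the true next one.
            candidates = [s for j, s in enumerate(sentences) if j != i + 1]
            examples.append((a, rng.choice(candidates), "NotNext"))
    return examples


if __name__ == "__main__":
    tokens, segments = format_pair("the man went to the store".split(), "he bought milk".split())
    print(tokens)
    print(segments)  # → [0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
    corpus = [
        "the man went to the store",
        "he bought a gallon of milk",
        "penguins are flightless birds",
        "the sky is blue",
    ]
    for a, b, label in make_nsp_examples(corpus, random.Random(0)):
        print(label, "|", a, "|", b)
```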

The main idea is that, by randomly masking some tokens, the model can use context on both the left and the right of each masked position, giving it a more thorough understanding of the text. In the following, we'll explore BERT models from the ground up: what they are, how they work, and, most importantly, how to use them practically in your projects. Bidirectional Encoder Representations from Transformers (BERT) is a breakthrough in how computers process natural language. Developed by Google in 2018, this open-source approach analyzes text in both directions at the same time, allowing it to better understand the meaning of words in context.
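The masking step can be sketched in a few lines. In the BERT paper, 15% of token positions are chosen for prediction; a chosen token is replaced by `[MASK]` 80% of the time, by a random token 10% of the time, and left unchanged 10% of the time. This minimal Python sketch assumes whitespace tokens and a hypothetical `mask_tokens` helper, and it samples each position independently, which simplifies the original data pipeline.

```python
import random
from typing import Optional

# Hypothetical helper; whitespace tokens assumed. Positions are sampled
# independently, a simplification of the original BERT data pipeline.
MASK = "[MASK]"
SPECIAL = {"[CLS]", "[SEP]"}  # never selected for prediction


def mask_tokens(
    tokens: list[str],
    vocab: list[str],
    rng: random.Random,
    mask_prob: float = 0.15,
) -> tuple[list[str], list[Optional[str]]]:
    """Return (inputs, labels) for masked language modeling.

    labels[i] is the original token at positions selected for prediction
    and None elsewhere, so a loss would be computed only where labels[i]
    is not None.
    """
    inputs = list(tokens)
    labels: list[Optional[str]] = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        # Skip special tokens and, with probability 1 - mask_prob, ordinary ones.
        if tok in SPECIAL or rng.random() >= mask_prob:
            continue
        labels[i] = tok
        r = rng.random()
        if r < 0.8:
            inputs[i] = MASK  # 80%: replace with [MASK]
        elif r < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return inputs, labels


if __name__ == "__main__":
    rng = random.Random(0)
    sentence = "[CLS] the man went to the store [SEP]".split()
    inputs, labels = mask_tokens(sentence, vocab=["dog", "ran", "blue"], rng=rng)
    print(inputs)
    print(labels)
```

Replacing some selected tokens with random words or leaving them unchanged means the model cannot assume that only `[MASK]` positions matter, which reduces the mismatch between pretraining, where `[MASK]` appears, and fine-tuning, where it does not.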